Kubernetes in DigitalOcean with Rancher

Published

Introduction

As I said in that post, these two are a nice combination for self-managed, cheap Kubernetes clusters. However, someone asked me "what about DigitalOcean?" (referral link, we both receive credits), because they don't want to host their clusters in Europe (Hetzner has data centers only in Germany and Finland) but in the US. They also already use DigitalOcean for something else and are happy with it.

At first I mentioned DigitalOcean's managed Kubernetes service, "DOKS", and suggested they try it. However, I explained that I have tried DOKS three times and each time I had problems with the free control plane (timeouts, unavailability, various errors). Each time, things like installing the Prometheus operator monitoring stack, or deploying multiple things at the same time with Pulumi, would result in connectivity issues with the master, making the cluster unusable. All that support said was to add more or bigger nodes, which I didn't need.

DigitalOcean allocates a certain amount of resources to the master depending on the number and size of the worker nodes, and unfortunately this doesn't seem to work well with small clusters like mine. I had 3 nodes with 4 cores and 8 GB of RAM each, and each time I had problems until I gave up and switched back to self-managing K8s in Hetzner Cloud.

But... when this person asked me about DigitalOcean and Rancher, I remembered that Rancher has built-in support for DigitalOcean, so it can create and manage nodes in DO directly using Docker Machine under the hood. This works like the node driver for Hetzner Cloud I mentioned in the previous post, but without the limitations I explained there.

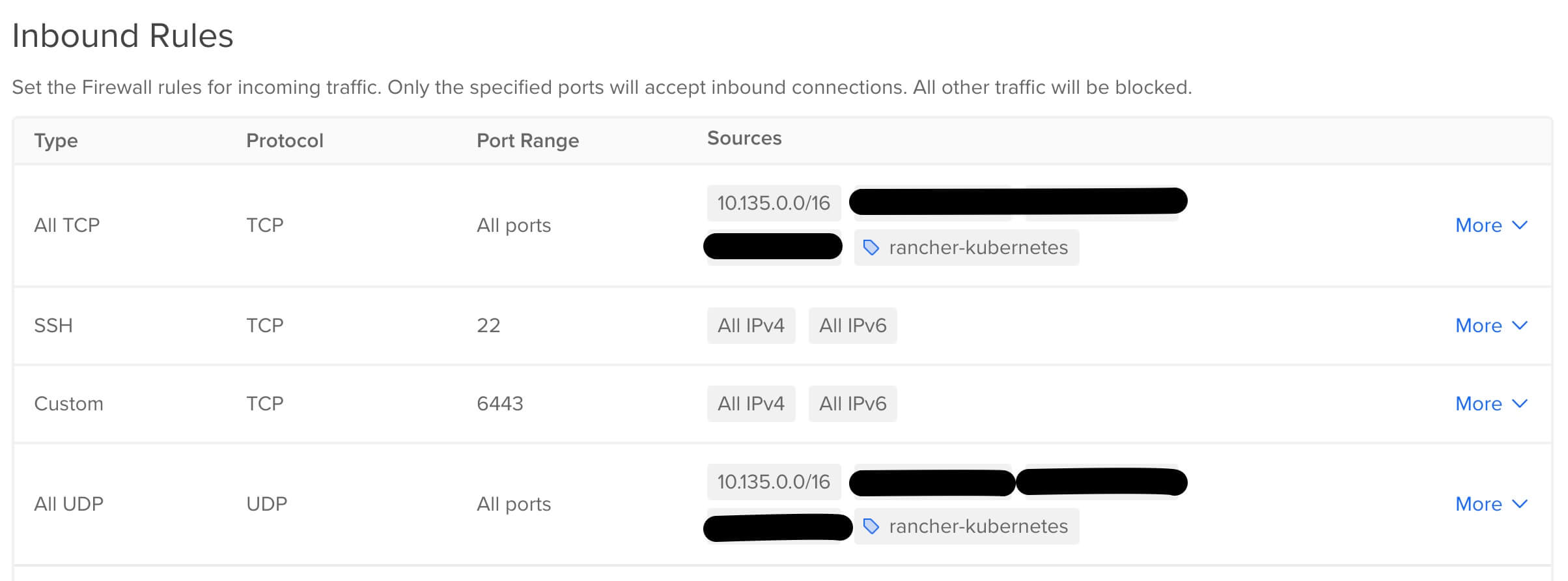

So this time I set up a cluster in DigitalOcean using Rancher's built-in node driver, and it was easy and quick. As I said in the previous post, Rancher does not configure any firewall, but DigitalOcean has a very nice firewall feature that lets you use tags to identify the resources a rule applies to. At the same time, when you configure a node template with the DigitalOcean node driver, Rancher lets you add tags that will be applied to the nodes. So it is very easy to configure the firewall directly in DO's control panel, without having to configure anything on the nodes either manually or with something like Ansible. The nice thing is that if I create new clusters, the firewall will automatically apply to them too thanks to the tags.

I really like how easy it was to set up, and that I can still manage node pools like with a managed service, which is not possible when deploying Kubernetes with the "custom nodes" setup I was using with Hetzner Cloud. At the moment my SaaS DynaBlogger is running in this DigitalOcean cluster, and I will keep this configuration for a week before deciding whether to stay or switch back to Hetzner Cloud, since HC is quite a bit cheaper.

Setting up Rancher

Configuring the firewall

In the DigitalOcean control panel go to Networking > Firewalls, and click on "Create Firewall". Configure the inbound rules like in the picture. You want to allow all traffic:

- within the VPC (you can find the range for your region under Networking > VPC - there should be a default VPC)

- from any resource with a tag you choose like "rancher-kubernetes"

- from the public IPs of any load balancers you will create later (so that they can talk to the nodes; it doesn't seem to be possible to add a tag to a load balancer provisioned from Kubernetes or anyway from the UI)

- from your IPs, so you can connect to the clusters

- from the IP of your Rancher instance. You are likely going to deploy Rancher to a droplet too, so in this case just add the tag "rancher-kubernetes" to that droplet too and the firewall will also apply to that one.

I love the use of tags to configure the firewall, because if we add nodes later we don't have to change anything. If you want to be able to access your Kubernetes API from anywhere, then also allow inbound traffic to port 6443 from all IPs.
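If you prefer to script the firewall rather than click through the control panel, something like the following could work. It is only a sketch: it assumes the `doctl` CLI is installed and authenticated, the VPC range and admin IP are placeholders, and the `tag:` and `address:` rule keys reflect my understanding of doctl's `--inbound-rules` format, so check `doctl compute firewall create --help` before running anything. The script only prints the command so you can review it first.

```shell
#!/bin/sh
# Sketch only: builds the --inbound-rules value for `doctl compute firewall create`.
# TAG matches the tag used in this post; VPC_RANGE and ADMIN_IP are placeholders.
TAG="rancher-kubernetes"
VPC_RANGE="10.0.0.0/16"
ADMIN_IP="203.0.113.10/32"

# Emit one TCP and one UDP rule that allow all ports from the given source.
# The source is a key:value pair such as tag:foo or address:1.2.3.4/32.
allow_all_from() {
  printf 'protocol:tcp,ports:1-65535,%s protocol:udp,ports:1-65535,%s' "$1" "$1"
}

# Join the rules for the tag, the VPC range and the admin IP with spaces.
build_inbound_rules() {
  printf '%s %s %s' \
    "$(allow_all_from "tag:$TAG")" \
    "$(allow_all_from "address:$VPC_RANGE")" \
    "$(allow_all_from "address:$ADMIN_IP")"
}

# Print the command instead of running it.
printf 'doctl compute firewall create --name %s --tag-names %s --inbound-rules "%s"\n' \
  "$TAG" "$TAG" "$(build_inbound_rules)"
```

The sketch covers inbound TCP and UDP only. ICMP, the load balancer IPs and any outbound rules would have to be added in the same way.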

Configuring the node template

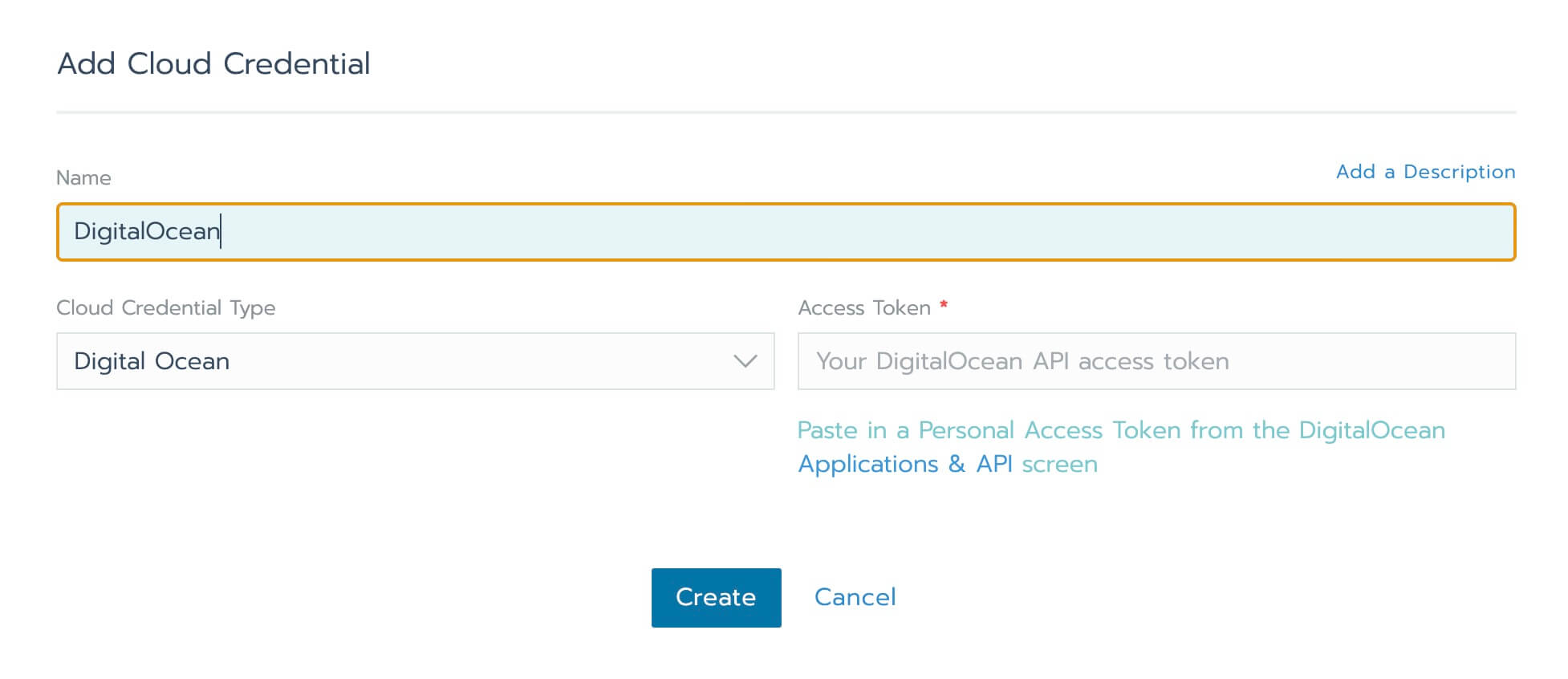

Next, go to Rancher and click on your avatar in the top right corner, then click "Cloud Credentials" and then on "Add Cloud Credential". Select DigitalOcean from the "Cloud Credential Type" dropdown and enter your token. Click on "Create" to confirm.

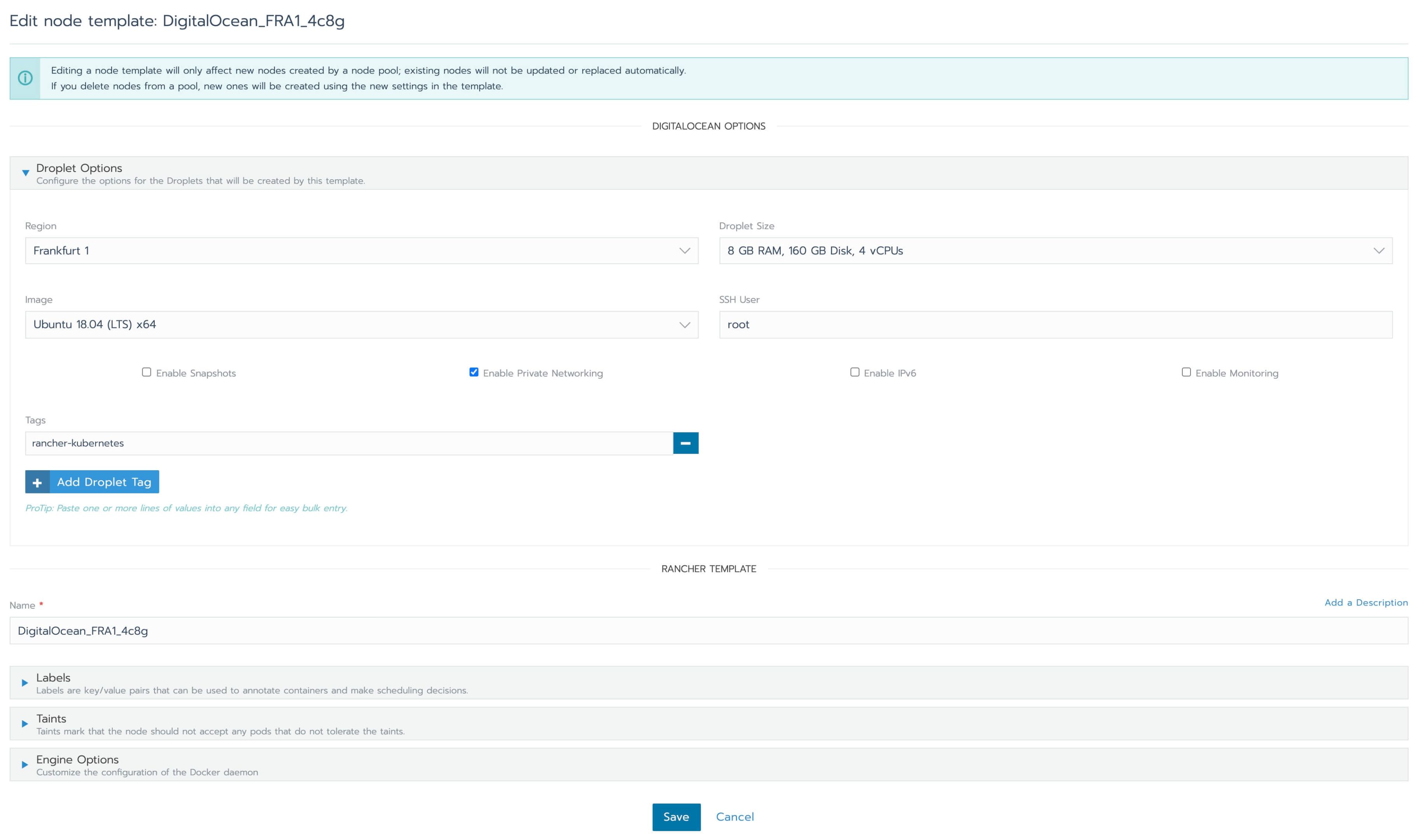

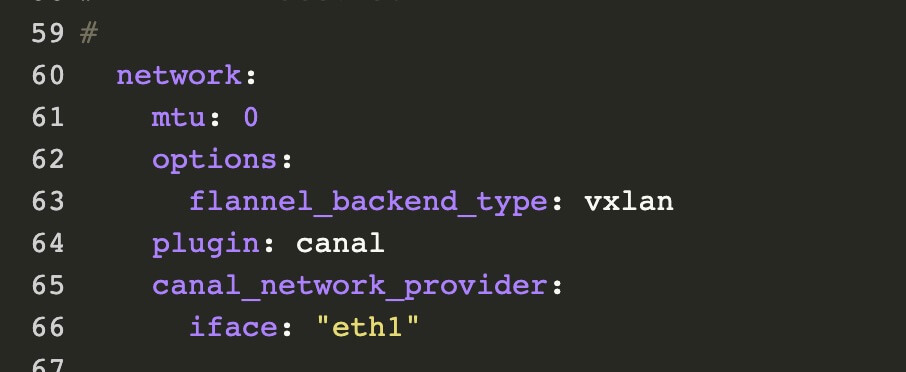

Next, click on "Manage Node Templates", select the cloud credential you just created, and in the next screen after clicking "Next" you can choose the region, and the instance type for this template. Choose Ubuntu 18.04 as the OS, and - important - add the same tag you have configured in the firewall earlier. I use "rancher-kubernetes" as tag for the nodes. Also make sure that you enable private networking; we'll configure the cluster so that the traffic between the nodes uses the private network. Give the template a name (I use the convention provider-region-number_of_cores-ram) and save:

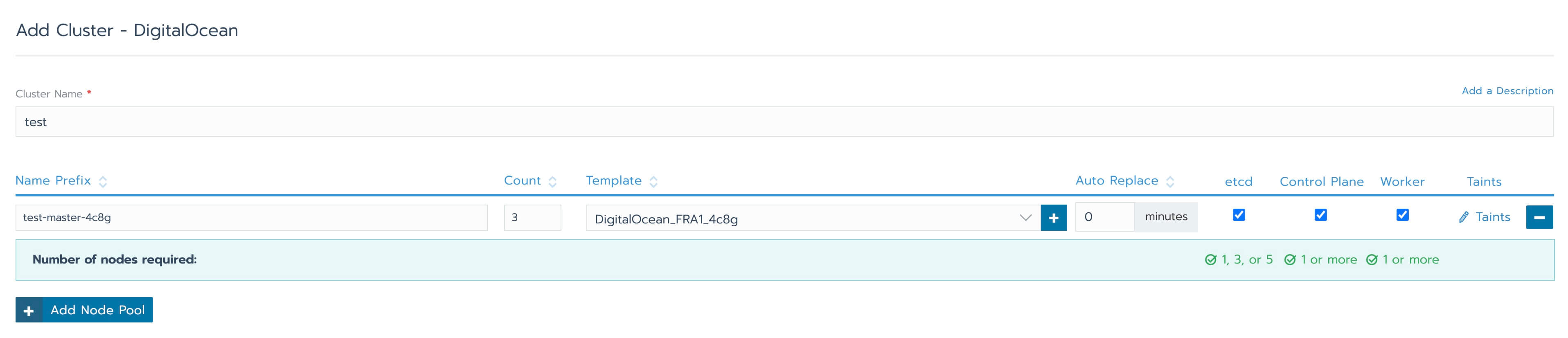

Creating the cluster

Give the cluster a name. For the first node pool, set a prefix for the node names (for example cluster_name-role-cores_ram), set the count to 3 to create an HA cluster, then select the node template you created earlier and select the roles. For a simple HA cluster with just 3 nodes, select all the roles like in the picture:

Now under Advanced Options disable Nginx ingress, because you are likely going to install the ingress controller with a load balancer provisioned in DO, using their cloud controller manager - which we'll install later.

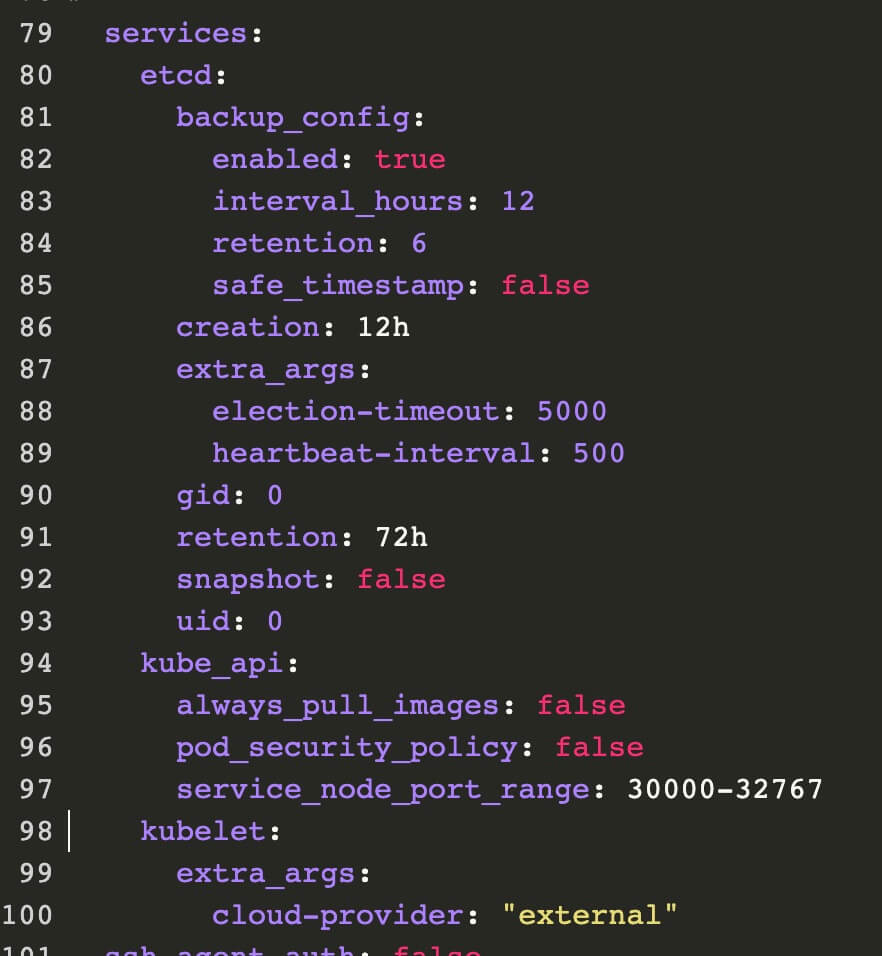

I also recommend you enable etcd snapshotting to S3 compatible storage so you can restore the cluster to a previous state if you screw it up.

Also, under Services configure the Kubelet so that it has cloud provider set to "external" which is required for the cloud controller manager:

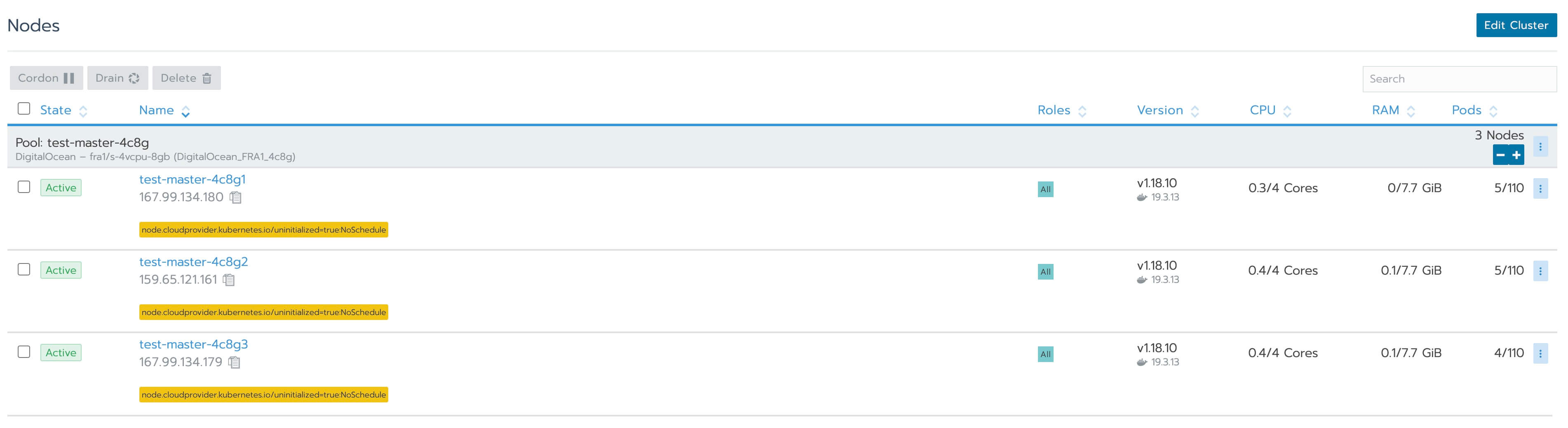

Finally, click on "Create", then go to the "Nodes" tab of the newly created cluster and wait for all the nodes to be ready and the provisioning messages to be gone. The nodes will initially have a taint to disable scheduling, which will be fixed automatically once we install the cloud controller manager.

Rancher will now connect to DigitalOcean, create the nodes and deploy Kubernetes for you. For me this takes around 7-8 minutes.

When the provisioning is complete the UI will look like in the picture:

Configuring access to the cluster with kubectl

Cloud controller manager

First, create a secret in the kube-system namespace with the DigitalOcean token you created earlier:

    apiVersion: v1
    kind: Secret
    metadata:
      name: digitalocean
      namespace: kube-system
    stringData:
      access-token: "your token"
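If you would rather not write the token to a file, the same secret can probably be created straight from the command line. This is a minimal sketch, assuming `kubectl` already points at the new cluster and that the token is exported as `DIGITALOCEAN_TOKEN` (a variable name I picked for illustration). The secret name and key match the manifest above.

```shell
#!/bin/sh
# Sketch: create the "digitalocean" secret without a manifest file.
# DIGITALOCEAN_TOKEN is a hypothetical variable name; export it beforehand.
create_do_secret() {
  if [ -z "${DIGITALOCEAN_TOKEN:-}" ]; then
    echo "DIGITALOCEAN_TOKEN is not set" >&2
    return 1
  fi
  # Same name, namespace and key as the manifest in the post.
  kubectl -n kube-system create secret generic digitalocean \
    --from-literal=access-token="$DIGITALOCEAN_TOKEN"
}
```

After sourcing the snippet, run `create_do_secret` once per cluster.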

Then deploy the cloud controller manager:

kubectl apply -f https://raw.githubusercontent.com/digitalocean/digitalocean-cloud-controller-manager/master/releases/v0.1.30.yml

Ensure you install the latest version. This should be pretty quick and once installed, the nodes will become schedulable and you will be able to create services of type LoadBalancer.
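To check that this actually happened, you can look at the rollout and at the node taints. The helpers below are a sketch under a few assumptions: `kubectl` points at the new cluster, the deployment is named `digitalocean-cloud-controller-manager` (which is what I expect from the upstream manifest, but verify it in yours), and the taint is the standard `node.cloudprovider.kubernetes.io/uninitialized` one that the kubelet sets when the cloud provider is "external".

```shell
#!/bin/sh
# Sketch: confirm the cloud controller manager is up and the nodes are schedulable.
# The deployment name is an assumption based on the upstream manifest.
CCM_DEPLOYMENT="digitalocean-cloud-controller-manager"
UNINITIALIZED_TAINT="node.cloudprovider.kubernetes.io/uninitialized"

# Block until the CCM deployment has finished rolling out, or time out.
wait_for_ccm() {
  kubectl -n kube-system rollout status "deployment/$CCM_DEPLOYMENT" --timeout=120s
}

# Print every node together with the keys of its taints.
show_node_taints() {
  kubectl get nodes \
    -o custom-columns='NAME:.metadata.name,TAINTS:.spec.taints[*].key'
}

# Exit 0 while at least one node still carries the "uninitialized" taint.
nodes_waiting_for_ccm() {
  show_node_taints | grep -q "$UNINITIALIZED_TAINT"
}
```

A typical sequence would be `wait_for_ccm`, then `show_node_taints`; once `nodes_waiting_for_ccm` returns non-zero, the taint is gone from all nodes.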

CSI driver for the block storage

You already have the secret for the token in kube-system, so all you need to do now is install the manifests:

    kubectl apply -f https://raw.githubusercontent.com/digitalocean/csi-digitalocean/master/deploy/kubernetes/releases/csi-digitalocean-v2.1.1/crds.yaml
    kubectl apply -f https://raw.githubusercontent.com/digitalocean/csi-digitalocean/master/deploy/kubernetes/releases/csi-digitalocean-v2.1.1/driver.yaml
    kubectl apply -f https://raw.githubusercontent.com/digitalocean/csi-digitalocean/master/deploy/kubernetes/releases/csi-digitalocean-v2.1.1/snapshot-controller.yaml

Make sure you specify the correct version of the driver for your cluster's Kubernetes version, which you can find in the README. For 1.18/1.19, at the time of this writing, you can install v2.1.1. Note that the README shows a single kubectl apply command, but for some reason I got an error when installing it that way, while it worked without any issues when installing one manifest at a time.
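Since the version appears in every URL, it may be handy to keep it in one place and loop over the manifests. A minimal sketch, assuming `kubectl` points at the new cluster; `CSI_VERSION` and the function names are my own, and the URL layout is simply the one used in the commands above, which could change in later releases.

```shell
#!/bin/sh
# Sketch: apply the CSI manifests one at a time for a given driver version.
# CSI_VERSION is a variable introduced here; take the value from the README.
CSI_VERSION="${CSI_VERSION:-v2.1.1}"
CSI_BASE="https://raw.githubusercontent.com/digitalocean/csi-digitalocean/master/deploy/kubernetes/releases"

# Print the three manifest URLs for a version, in the order they are applied.
csi_manifest_urls() {
  for name in crds driver snapshot-controller; do
    printf '%s/csi-digitalocean-%s/%s.yaml\n' "$CSI_BASE" "$1" "$name"
  done
}

# Apply each manifest separately, stopping at the first failure.
install_csi() {
  for url in $(csi_manifest_urls "$CSI_VERSION"); do
    kubectl apply -f "$url" || return 1
  done
}
```

Running `csi_manifest_urls v2.1.1` first lets you confirm the URLs before `install_csi` touches the cluster.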

Pro tip: I recommend you "watch releases" for both repos so you know when to upgrade.

Conclusion

So there you have it, if you are interested in both Rancher and DigitalOcean. Let me know in the comments if this works for you or what you think about it. I would also be curious to hear if you have tried DigitalOcean's managed Kubernetes and what your experience with it was/is like.

I am passionate about WebDev, DevOps and CyberSecurity. I am based in Espoo, Finland, where I work with the backend team at
